Reinforcement Learning for Quantum Control

University of Oslo — Bachelor's Thesis

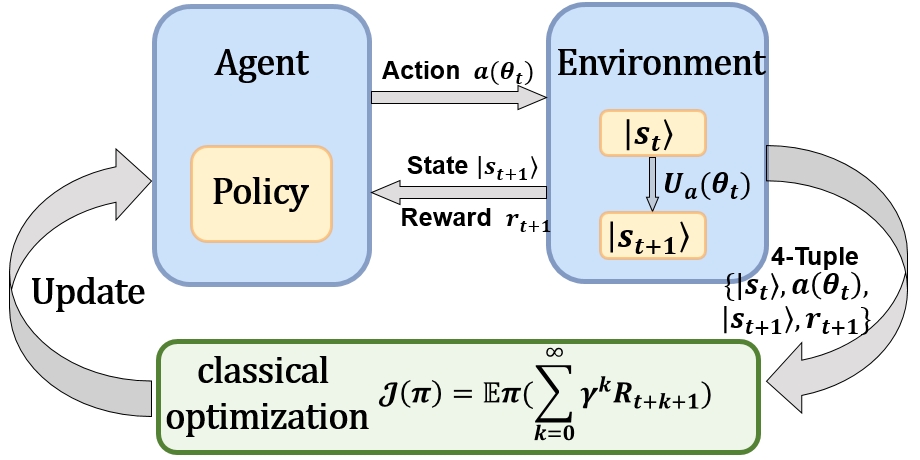

Reinforcement learning techniques based on deep neural networks show considerable promise for control optimization: they exploit non-local regularities in noisy control trajectories and facilitate transfer learning between tasks. To bring these capabilities to quantum control, a control framework has been proposed that simultaneously optimizes the speed and fidelity of quantum computation while mitigating leakage and stochastic control errors. For a broad family of two-qubit unitary gates that are important for quantum simulation of many-electron systems, control robustness is improved by adding control noise to the training environments of the reinforcement learning agents, which are trained with trust region policy optimization (TRPO). The resulting control solutions reduce the average gate error by two orders of magnitude relative to baseline stochastic gradient descent solutions, and reduce gate time by up to one order of magnitude relative to optimal gate synthesis counterparts.
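To make the idea of noise injection concrete, here is a minimal toy sketch in Python with NumPy. It is not the framework described above. It assumes a single qubit driven along sigma_x with piecewise-constant control amplitudes, whereas the work summarized here targets two-qubit gates with leakage. Gaussian noise is added to each amplitude during a rollout, and the result is scored with the standard average gate fidelity for unitaries. All names (`step_unitary`, `rollout`, `average_gate_fidelity`, `noise_std`) are hypothetical and chosen for illustration only.

```python
import numpy as np

SIGMA_X = np.array([[0, 1], [1, 0]], dtype=complex)


def step_unitary(amplitude, dt):
    """Exact propagator exp(-i * amplitude * dt * sigma_x / 2) for one time slice."""
    theta = amplitude * dt / 2
    return np.cos(theta) * np.eye(2, dtype=complex) - 1j * np.sin(theta) * SIGMA_X


def rollout(amplitudes, dt, noise_std=0.0, rng=None):
    """Apply a piecewise-constant control sequence with Gaussian amplitude noise.

    noise_std is an assumed noise model (additive, independent per time slice).
    """
    rng = rng if rng is not None else np.random.default_rng()
    u = np.eye(2, dtype=complex)
    for a in amplitudes:
        noisy_amplitude = a + noise_std * rng.normal()
        u = step_unitary(noisy_amplitude, dt) @ u
    return u


def average_gate_fidelity(u, target):
    """Average gate fidelity between two unitaries of dimension d."""
    d = u.shape[0]
    overlap = abs(np.trace(target.conj().T @ u)) ** 2
    return (overlap + d) / (d * (d + 1))


# A pi rotation about x implements the X gate up to a global phase.
amplitudes = np.full(10, np.pi)
dt = 0.1
ideal = average_gate_fidelity(rollout(amplitudes, dt), SIGMA_X)
noisy = average_gate_fidelity(
    rollout(amplitudes, dt, noise_std=0.2, rng=np.random.default_rng(0)), SIGMA_X
)
print(f"ideal fidelity: {ideal:.6f}, noisy fidelity: {noisy:.6f}")
```

In a training loop, an agent would presumably see only noisy rollouts like the second one and receive a reward based on the resulting fidelity, so that control sequences which degrade sharply under amplitude noise are penalized.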

References / Inspired By

- Richard S. Sutton, Andrew G. Barto. MIT Press (2018). This textbook is the standard reference for reinforcement learning. It presents the conceptual framework and core algorithms of RL, including dynamic programming, Monte Carlo methods, temporal-difference learning, and policy gradient techniques, and discusses extensions to function approximation and modern deep RL.

- Murphy Yuezhen Niu, Sergio Boixo, Vadim Smelyanskiy, Hartmut Neven. Physical Review A (2018) • arXiv:1803.01857. This work proposes a deep reinforcement learning framework for universal quantum control that optimizes both gate fidelity and execution time under realistic noise and leakage. By incorporating control noise during training, the approach achieves substantial reductions in gate error and duration compared to traditional gradient-based quantum control methods.

- Marin Bukov, Alexandre G. R. Day, Dries Sels, Phillip Weinberg, Anatoli Polkovnikov, Pankaj Mehta. Physical Review X (2018) • arXiv:1705.00565. This work applies reinforcement learning (RL) algorithms to quantum state preparation in nonintegrable many-body systems. The authors demonstrate that RL can find short, high-fidelity control protocols that rival those from optimal control methods. They also identify a spin-glass-like phase transition in the space of control protocols as a function of protocol duration, which reveals complex structure in the quantum control landscape and highlights the potential of RL for out-of-equilibrium quantum physics tasks.